Data Mining And Data Warehousing

Decision Tree

Decision Tree

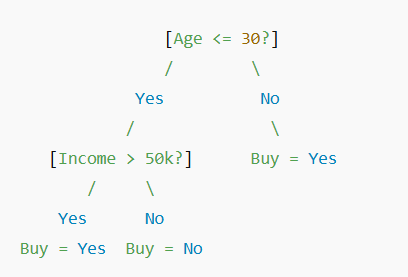

A Decision Tree is a popular and intuitive method used in predictive data mining, especially for classification and regression tasks. It mimics the human decision-making process by breaking down complex decisions into a series of simpler questions.

A decision tree is a tree-like structure where:

- Each internal node represents a decision or test on a feature (e.g., "Is age > 30?")

- Each branch represents the outcome of the test (e.g., "Yes" or "No")

- Each leaf node represents a final result or class label (e.g., "Approved" or "Denied")

Let's say we want to predict whether someone will buy a laptop based on age and income.

- If the person is under 30 and earns over 50k --> Buy

- If the person is under 30 and earns less --> Don't buy

- If the person is over 30 --> Buy

- Select the best feature to split the data. This is usually done using:

-

- Gini Index

- Information Gain (from Entropy)

- Gain Ratio

- Split the dataset based on the chosen feature.

- Repeat recursively for each subset, until:

- All data in a node belongs to the same class, or You reach a stopping criterion (e.g., max depth, minimum samples)